Latest Content

Featured Article

Article

The Family in Ancient Mesopotamia

Family in ancient Mesopotamia was considered the essential unit that provided social stability in the present, maintained traditions of the past, and...

Featured Image

Image

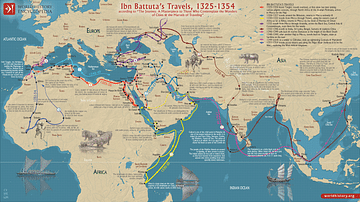

Ibn Battuta’s Travels, 1325-1354

A map illustrating Ibn Battuta's (Abū Abd Allāh Muḥammad ibn Abd Allāh Al-Lawātī, 1304 – c.1368) series of extraordinary journeys across the Islamic...

Definition

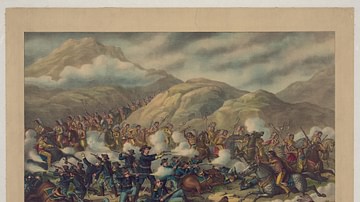

Great Sioux War

The Great Sioux War (also given as the Black Hills War, 1876-1877) was a military conflict between the allied forces of the Lakota Sioux/Northern Cheyenne...

Collection

Kingdoms and Periods of Ancient Egypt

The kingdoms and periods of ancient Egypt are modern-day designations for the history of the civilization. The people themselves did not refer to these...

Collection

Aztec Religion & Culture

Aztec religion and culture flourished between c. 1345 and 1521 and, at its height, influenced the majority of the people of northern Mesoamerica. Great...

Article

Black Elk on the Battle of the Little Bighorn

Black Elk (l. 1863-1950) of the Oglala Lakota Sioux was twelve years old at the Battle of the Little Bighorn on 25 June 1876. He gives his account of...

Article

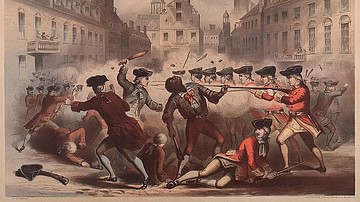

African Americans in the American Revolution

On the eve of the American Revolution (1765-1789), the Thirteen Colonies had a population of roughly 2.1 million people. Around 500,000 of these were...

Collection

Plato's Life & Influence

The Greek philosopher Plato (l. 424/423 to 348/347 BCE) is recognized as the founder of Western philosophy, following his mentor, Socrates. He founded...

Definition

Battle of Britain

The Battle of Britain, dated 10 July to 31 October 1940 by the UK Air Ministry, was an air battle between the German Luftwaffe and British Royal Air Force...

Definition

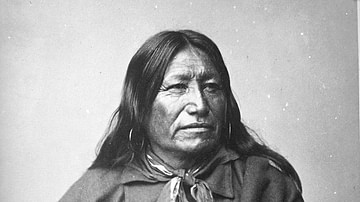

Sioux Chief Spotted Tail (Eastman's Biography)

Spotted Tail (Sinte Galeska, l. 1823-1881) was a Brule Lakota Sioux chief best known for choosing diplomacy over military conflict in dealing with the...

Article

Battle of Guilford Court House

The Battle of Guilford Court House (15 March 1781) was one of the last major engagements of the American Revolutionary War (1775-1783). Fought near...

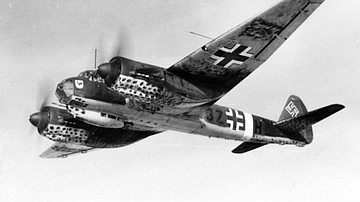

Definition

Junkers Ju 88

The Junkers Ju 88 was a two-engined medium bomber plane used by the German Air Force (Luftwaffe) throughout the Second World War (1939-45). Ju 88s were...

Article

Sioux Life Lessons: Iktomi and the Muskrat & Iktomi's Blanket

The Sioux stories known as Iktomi tales concern the trickster figure Iktomi (also known as Unktomi) who appears, variously, as a hero, sage, villain...

Article

Iktomi Tales

Iktomi (also known as Unktomi) is a trickster figure of the lore of the Lakota Sioux nation similar to tricksters of other nations, such as Wihio of...

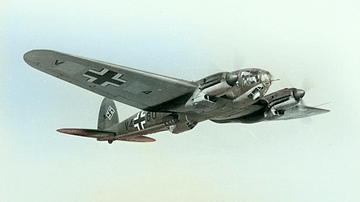

Definition

Heinkel He 111

The Heinkel He 111 was a medium two-engined bomber plane used by the German Air Force (Luftwaffe) during the Second World War (1939-45). Heinkel He...

Image Gallery

A Gallery of Maya Cities

The Maya Civilization flourished between 250 and 950 CE, although it drew upon earlier civilizations such as that of the Olmecs (1500-200 BCE) and Zapotec...